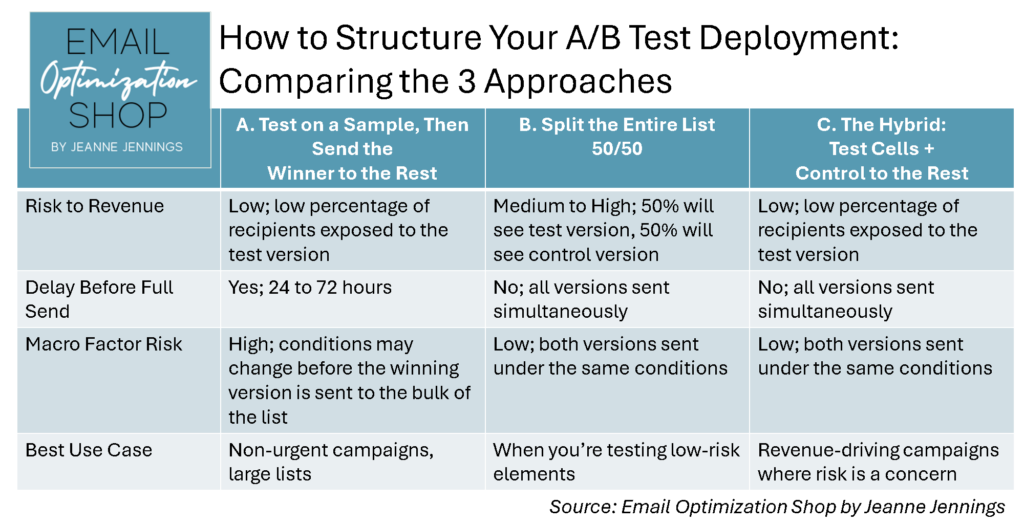

There’s more than one “right way” to structure an A/B split test. But there are definitely better ways depending on your goals, your risk tolerance, and how much revenue is riding on your send.

I created this chart to help students in my ‘More Effective A/B Split Testing’ workshop, and now you, get clarity on the three most common ways to structure a test and which one to choose in a given situation.

Let’s walk through them together.

1. Test on a Sample, Then Send the Winner to the Rest

This method is great if you have a large list and a little time to play with.

You send the test to a small portion of your audience (often 10% to 20%, depending on the sample size you estimate you need to get results), split evenly between your control and test versions. Once the results are in, you send the winner to the rest of your list.
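
If you want a rough feel for how that sample size estimate works, here is a minimal Python sketch. It assumes a standard two-sided test comparing two conversion rates, with the conventional 95% confidence and 80% power; those settings, the function names, and the example rates are my illustrative assumptions, not fixed rules. Treat it as a starting point, not a substitute for a proper power calculation.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_version(baseline_rate, expected_rate, alpha=0.05, power=0.80):
    """Approximate recipients needed per version (control and test each).

    Uses the normal-approximation formula for comparing two proportions.
    baseline_rate: the rate you expect from the control (e.g. 0.02 = 2%).
    expected_rate: the rate you hope the test version reaches.
    alpha and power are assumed conventional defaults.
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided critical value
    z_power = NormalDist().inv_cdf(power)
    variance = (baseline_rate * (1 - baseline_rate)
                + expected_rate * (1 - expected_rate))
    effect = expected_rate - baseline_rate
    return ceil((z_alpha + z_power) ** 2 * variance / effect ** 2)


def share_of_list_needed(list_size, per_version):
    """Fraction of the list used by a test with two equal-sized versions."""
    return 2 * per_version / list_size


if __name__ == "__main__":
    # Hypothetical numbers: 2% baseline, hoping for 2.5%, on a 200,000 list.
    n = sample_size_per_version(0.02, 0.025)
    print(n, "recipients per version")
    print(f"{share_of_list_needed(200_000, n):.0%} of the list in the test")
```

The smaller the lift you are trying to detect, the more recipients you need per version, which is why the test share of your list can vary so much from one test to the next.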

Pros:

- Minimizes risk (only a small group sees the test version)

- Ideal for big audiences where you still want to protect performance

Cons:

- You have to wait, usually 24 to 72 hours, for results

- If the inbox landscape changes during that time, your “winning” version might not hold up

Use this method when you’re running evergreen content or have a long promotional window, and when you’re OK with some delay before the main send goes out.

2. Split the Entire List 50/50

This is the simplest and fastest approach. You just divide your audience in half and send both versions at the same time.

Pros:

- Everyone gets the email at the same time, under the same conditions

- In 24 to 72 hours you know the full final results of the send

Cons:

- Half your list gets the “losing” version, which could impact performance

- Riskier if you’re testing something big, like a radically different offer or creative concept

This works well for lower-stakes tests: subject lines, image swaps, or copy tweaks. If you can afford to sacrifice a little performance in exchange for speed and simplicity, go for it.

3. The Hybrid: Test Cells + Control to the Rest

This is my go-to method when there’s revenue on the line and we want to test without taking on too much risk.

Here’s how it works:

- You pull out two small test cells (e.g., 10% each, based on your estimated sample size)

- You send the control to Test Cell A and to the rest of your list (everyone who isn’t in a test cell)

- You send the test version to Test Cell B

Now you’ve got a valid test running between your test cells… and you’ve protected your overall results by keeping most of the list on the proven control.
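
Here is one way the hybrid split could be sketched in Python. It assumes your list is a simple collection of email addresses and that you assign people to cells at random; the function name, the dictionary keys, and the 10% default are hypothetical choices for illustration. Most email platforms have their own segmentation tools, so read this as the logic, not a recipe for any particular tool.

```python
import random


def hybrid_split(recipients, cell_fraction=0.10, seed=None):
    """Randomly carve two equal test cells out of a list.

    Test Cell A and the remainder both get the control;
    Test Cell B gets the test version.
    cell_fraction is the share of the list in EACH cell (assumed 10%).
    """
    rng = random.Random(seed)   # seed makes the split repeatable
    shuffled = list(recipients)
    rng.shuffle(shuffled)       # random assignment avoids biased cells

    cell_size = int(len(shuffled) * cell_fraction)
    return {
        "cell_a_control": shuffled[:cell_size],
        "cell_b_test": shuffled[cell_size:2 * cell_size],
        "rest_control": shuffled[2 * cell_size:],
    }


if __name__ == "__main__":
    # Hypothetical list of 1,000 addresses
    audience = [f"user{i}@example.com" for i in range(1000)]
    cells = hybrid_split(audience, cell_fraction=0.10, seed=42)
    for name, members in cells.items():
        print(name, len(members))
```

When you read the results, compare Test Cell A against Test Cell B only. The rest of the list also got the control, but it is a much larger group, so it is there to protect performance, not to serve as the comparison.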

Pros:

- Real-world comparison under the same conditions

- The bulk of your list sees the control, so you protect performance

- No delay required; you send everything at once

Cons:

- Requires slightly more setup (but worth it, IMO)

- You’ll need enough volume in your test cells to reach statistical significance
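
To check whether the difference between your two test cells is statistically significant, one common approach is a two-proportion z-test. The sketch below is a minimal version of that test, assuming you are comparing a simple yes/no outcome (such as clicked or converted) and that your cells are large enough for the normal approximation to hold. The function name and the example counts are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conversions_a, sends_a, conversions_b, sends_b):
    """Two-sided z-test for the difference between two conversion rates.

    Returns (z, p_value). A is the control cell, B is the test cell.
    Assumes at least one conversion and one non-conversion overall;
    otherwise the standard error is zero and the test is undefined.
    """
    rate_a = conversions_a / sends_a
    rate_b = conversions_b / sends_b
    # Pooled rate: the shared rate if there were truly no difference
    pooled = (conversions_a + conversions_b) / (sends_a + sends_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / sends_a + 1 / sends_b))
    z = (rate_b - rate_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


if __name__ == "__main__":
    # Hypothetical results: 200 of 10,000 (control) vs. 260 of 10,000 (test)
    z, p = two_proportion_z_test(200, 10_000, 260, 10_000)
    print(f"z = {z:.2f}, p = {p:.4f}")
    print("Significant at 95%" if p < 0.05 else "Not significant at 95%")
```

A p-value below 0.05 is the usual threshold for 95% confidence, but that cutoff is a convention. Pick the confidence level that matches how much risk you are willing to take on the decision.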

This structure is perfect for ecommerce and B2B marketers who want to test, but can’t afford to “lose” half their audience on a risky idea.

Final Thought: It’s About Strategy, Not Just Structure

When you pick a test structure, think about more than just convenience. Ask yourself:

- How big is the risk if one version underperforms?

- How much time do I have before this needs to go out?

- Will my test cells give me reliable data, or just noise?

No matter which method you use, A/B testing should feel like a tool to help you learn, not a gamble you’re afraid to take.

Got a test idea you want to run past me? Or need help estimating your sample size, setting up your test cells, and/or measuring statistical significance? Let’s talk.

Until next time,

jj

Photo by Alain Pham on Unsplash